Enterprising Minecraft

Phase 1

2026

Cloud Infrastructure

Kubernetes

What started as a simple Minecraft server became a project for building Kubernetes expertise. I migrated a traditional standalone server into a production-grade Kubernetes cluster, proving that complex infrastructure concepts can be learned through hands-on practice.

Process

01

The Challenge

My home-hosted server was hitting the limits of traditional infrastructure:

Players experienced lag when moving between world1 and world2 as both competed for the same CPU resources.

Every update meant 12+ minutes of downtime: shutting down the server, applying changes, and waiting for it to restart.

I needed enterprise-grade capabilities: zero-downtime updates, resource isolation, and the ability to scale individual components without affecting the entire system.

02

Learning & Research

I invested two months mastering Kubernetes fundamentals through hands-on study with The Kubernetes Book by Nigel Poulton, diving deep into concepts I'd never encountered before. Coming from zero container experience, I had to learn Docker, YAML configuration, VS Code, Linode, GitHub, Linux, and the entire Kubernetes ecosystem from scratch.

Key focus areas included StatefulSets for persistent applications, resource management, and CI/CD pipelines—all critical for transforming a traditional server into a cloud-native deployment.

03

Architecture & Decisions

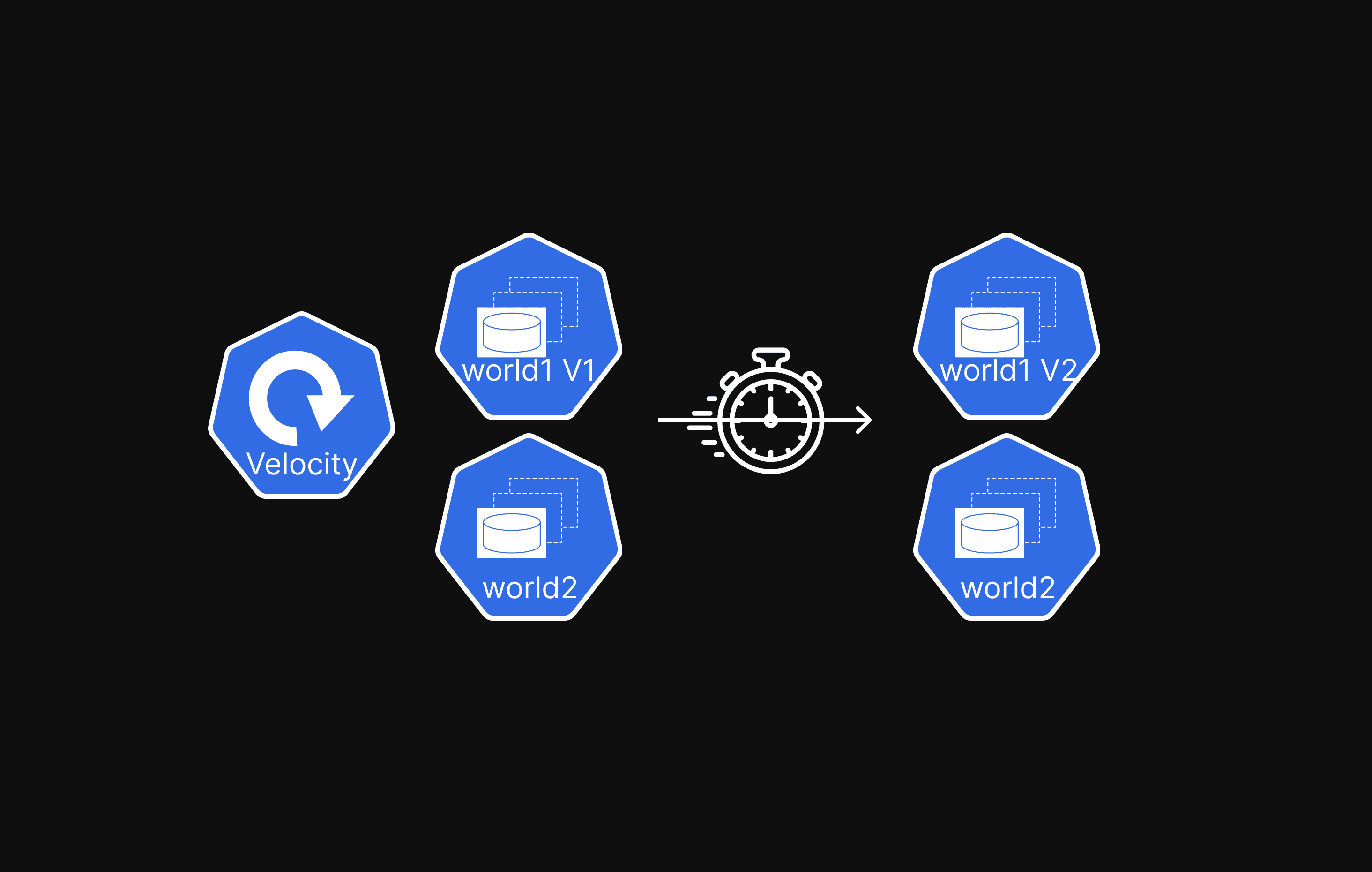

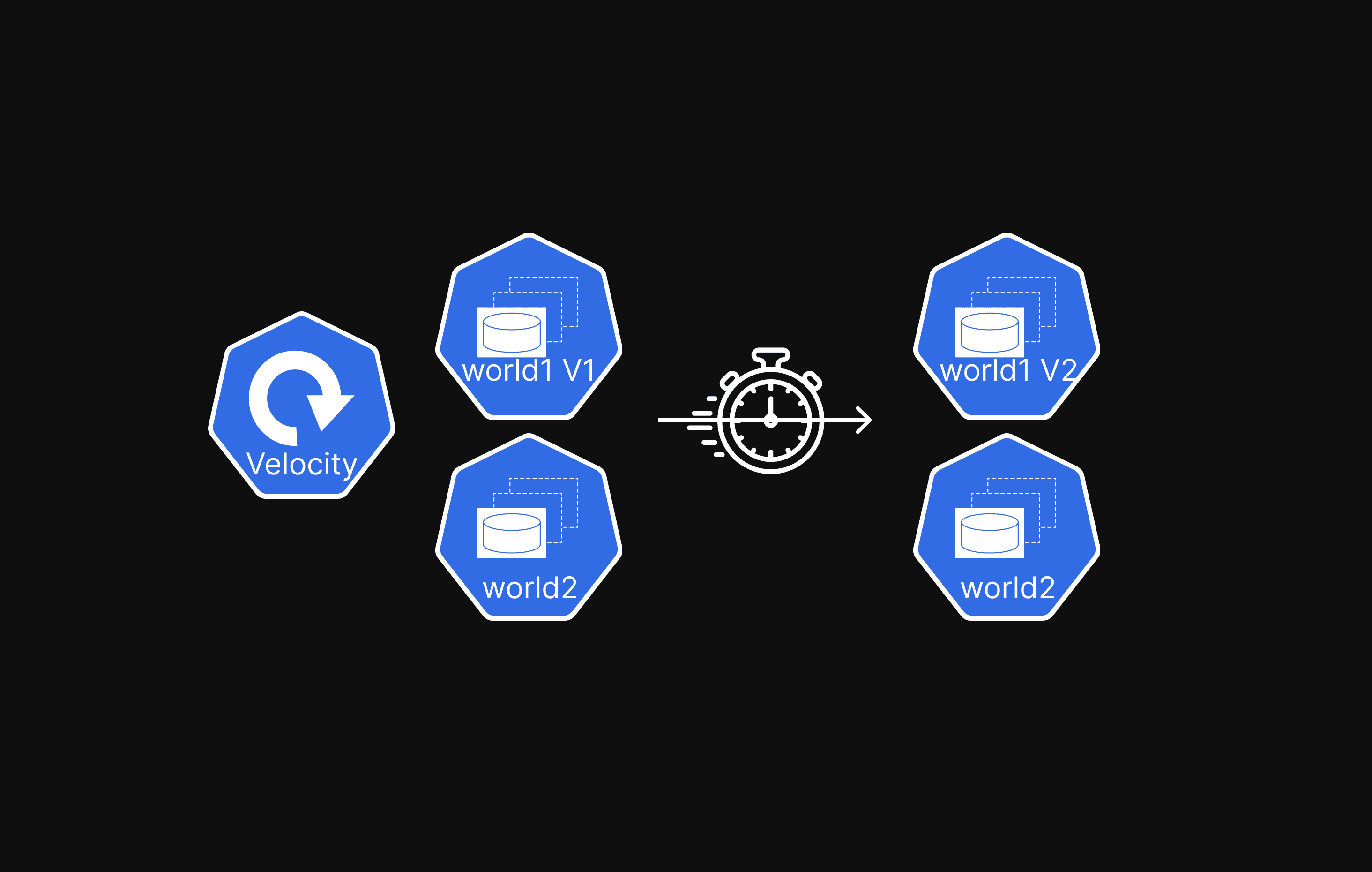

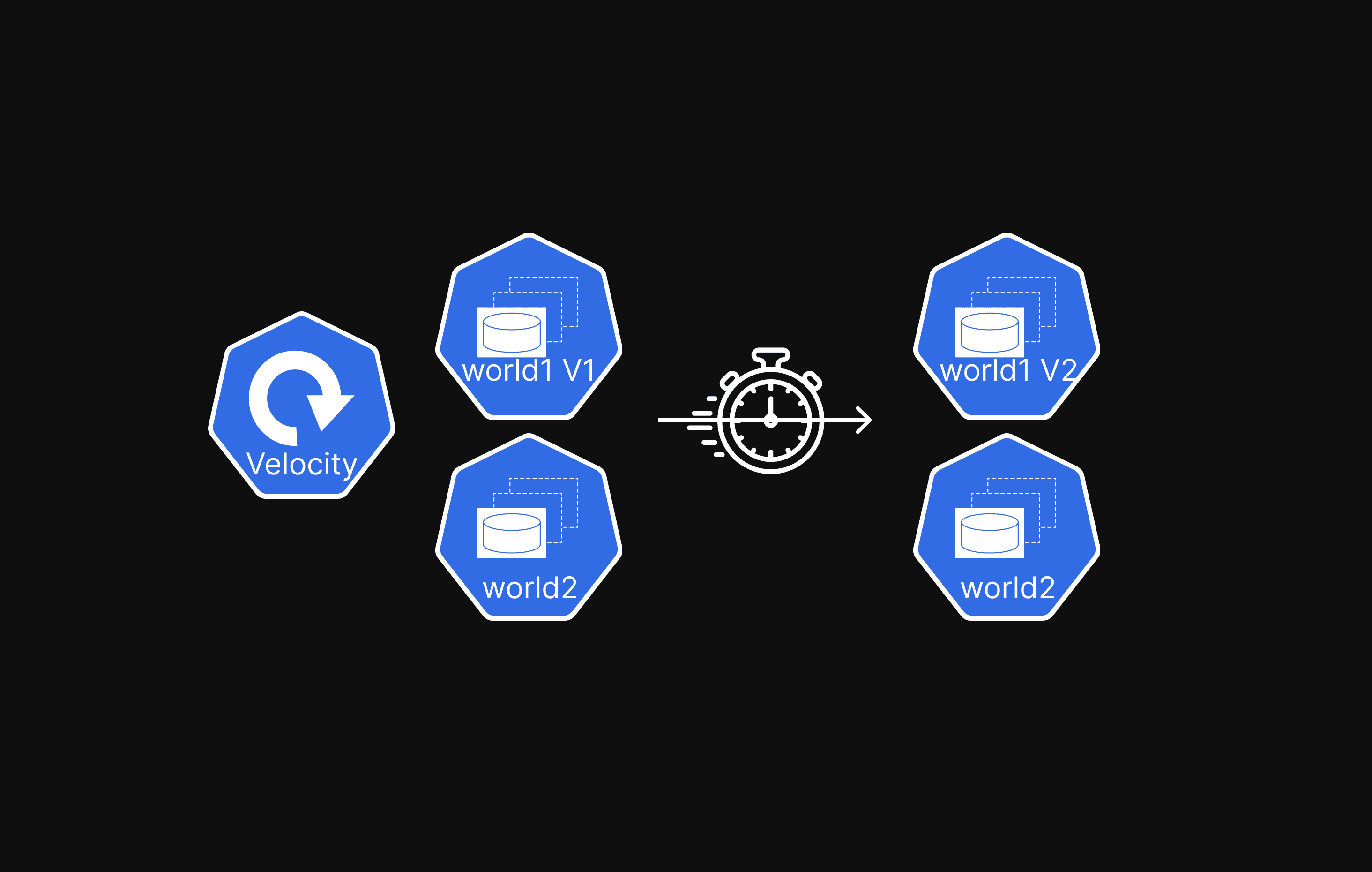

I designed a microservices architecture separating world1 and world2 into dedicated servers behind a Velocity proxy for seamless player switching.

Key decisions included:

Choosing StatefulSets over Deployments for persistent world data

Implementing resource isolation (8 GB for world1, 3 GB for world2, 1 GB for the proxy)

Building a complete CI/CD pipeline with GitHub Actions

Opting for trusted itzg container images over building custom ones, which kept hands-on learning time focused on Kubernetes orchestration rather than container development
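As a rough sketch of how the StatefulSet and memory decisions above could fit together, the Python below builds one manifest per world and prints it as JSON, which `kubectl apply -f` accepts alongside YAML. Only the memory figures come from this write-up. The `minecraft` namespace, the `data` volume name, the 10Gi storage size, the use of `Gi` units, and the function name are all assumptions for illustration, not the project's actual manifests.

```python
import json

# Memory figures come from the decisions above; everything else is assumed.
MEMORY = {"world1": "8Gi", "world2": "3Gi", "proxy": "1Gi"}


def world_statefulset(name: str, storage: str = "10Gi") -> dict:
    """Build a single-replica StatefulSet manifest for one world server."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "StatefulSet",
        "metadata": {"name": name, "namespace": "minecraft"},  # assumed namespace
        "spec": {
            "serviceName": name,  # assumes a headless Service with the same name
            "replicas": 1,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [{
                        "name": "minecraft",
                        "image": "itzg/minecraft-server",
                        "env": [{"name": "EULA", "value": "TRUE"}],
                        "ports": [{"containerPort": 25565}],
                        # Equal request and limit reserves the memory for this pod.
                        "resources": {
                            "requests": {"memory": MEMORY[name]},
                            "limits": {"memory": MEMORY[name]},
                        },
                        "volumeMounts": [{"name": "data", "mountPath": "/data"}],
                    }],
                },
            },
            # Each replica gets its own PersistentVolumeClaim for world data.
            "volumeClaimTemplates": [{
                "metadata": {"name": "data"},
                "spec": {
                    "accessModes": ["ReadWriteOnce"],
                    "resources": {"requests": {"storage": storage}},
                },
            }],
        },
    }


if __name__ == "__main__":
    for world in ("world1", "world2"):
        print(json.dumps(world_statefulset(world), indent=2))
```

The `volumeClaimTemplates` entry is what distinguishes this from a Deployment: the claim follows the pod's stable identity, so a restarted world server reattaches to the same data. In practice the JVM heap would need to sit somewhat below the container limit to leave room for non-heap memory.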

05

Implementation & Process

Over three weeks of iterative development, I built the complete Kubernetes deployment using VS Code, K3s, and GitHub Actions. The process involved:

Creating YAML manifests for StatefulSets, services, and persistent volumes

Implementing a self-hosted GitHub Actions runner for automated deployments

Rebuilding the entire system from scratch after the first week's build failed, applying lessons learned about resource allocation and configuration management

The organized file structure separated namespaces, configs, secrets, and storage, in line with common DevOps practice.
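One benefit of a separated layout like this is that a deployment job can apply the directories in dependency order. The sketch below shows the idea under stated assumptions: the `k8s` root, the directory names, and the `workloads` folder are hypothetical, since the write-up names the categories but not the actual paths. The commands are plain `kubectl apply -f <directory>` calls that a self-hosted runner step could execute.

```python
from pathlib import PurePosixPath

# Hypothetical directory names. The source only says the layout separated
# namespaces, configs, secrets, and storage; "workloads" is an added assumption.
APPLY_ORDER = ["namespaces", "configs", "secrets", "storage", "workloads"]


def apply_commands(root: str = "k8s") -> list[list[str]]:
    """Return one `kubectl apply` command per directory, in dependency order."""
    return [
        ["kubectl", "apply", "-f", str(PurePosixPath(root) / directory)]
        for directory in APPLY_ORDER
    ]


if __name__ == "__main__":
    # Print the commands instead of running them, so the sketch has no side effects.
    for command in apply_commands():
        print(" ".join(command))
```

Namespaces go first because every other object lives inside one, and configs, secrets, and storage precede the workloads that mount them.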

06

Deployment & Results

The production deployment achieved dramatic operational improvements:

Update downtime dropped from 12+ minutes to under 30 seconds through rolling updates

Players no longer experience lag when moving between worlds, because dedicated resource allocation prevents CPU contention

The Velocity proxy enables seamless server switching while individual servers can be updated independently

After resolving initial memory allocation issues using kubectl logs for diagnosis, the system has maintained excellent performance and stability
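To illustrate what updating one server independently can look like, here is a minimal sketch that builds a strategic-merge patch changing a single container image, followed by a rollout check. The namespace, StatefulSet name, container name, and image tag are placeholders chosen for the example, and this is one possible approach rather than the pipeline actually used in the project.

```python
import json


def image_patch(container: str, image: str) -> dict:
    """Strategic-merge patch that changes one container's image by name."""
    return {
        "spec": {
            "template": {
                "spec": {"containers": [{"name": container, "image": image}]}
            }
        }
    }


def rollout_commands(namespace: str, statefulset: str,
                     container: str, image: str) -> list[list[str]]:
    """Commands to patch one StatefulSet and wait for its rollout to finish."""
    patch = json.dumps(image_patch(container, image))
    return [
        ["kubectl", "-n", namespace, "patch", "statefulset", statefulset,
         "--patch", patch],
        ["kubectl", "-n", namespace, "rollout", "status",
         f"statefulset/{statefulset}"],
    ]


if __name__ == "__main__":
    # All four arguments are illustrative placeholders.
    for command in rollout_commands(
        "minecraft", "world2", "minecraft", "itzg/minecraft-server:example-tag"
    ):
        print(command)
```

Because the patch targets only the named StatefulSet, the other world and the proxy keep running while the changed pod is replaced.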

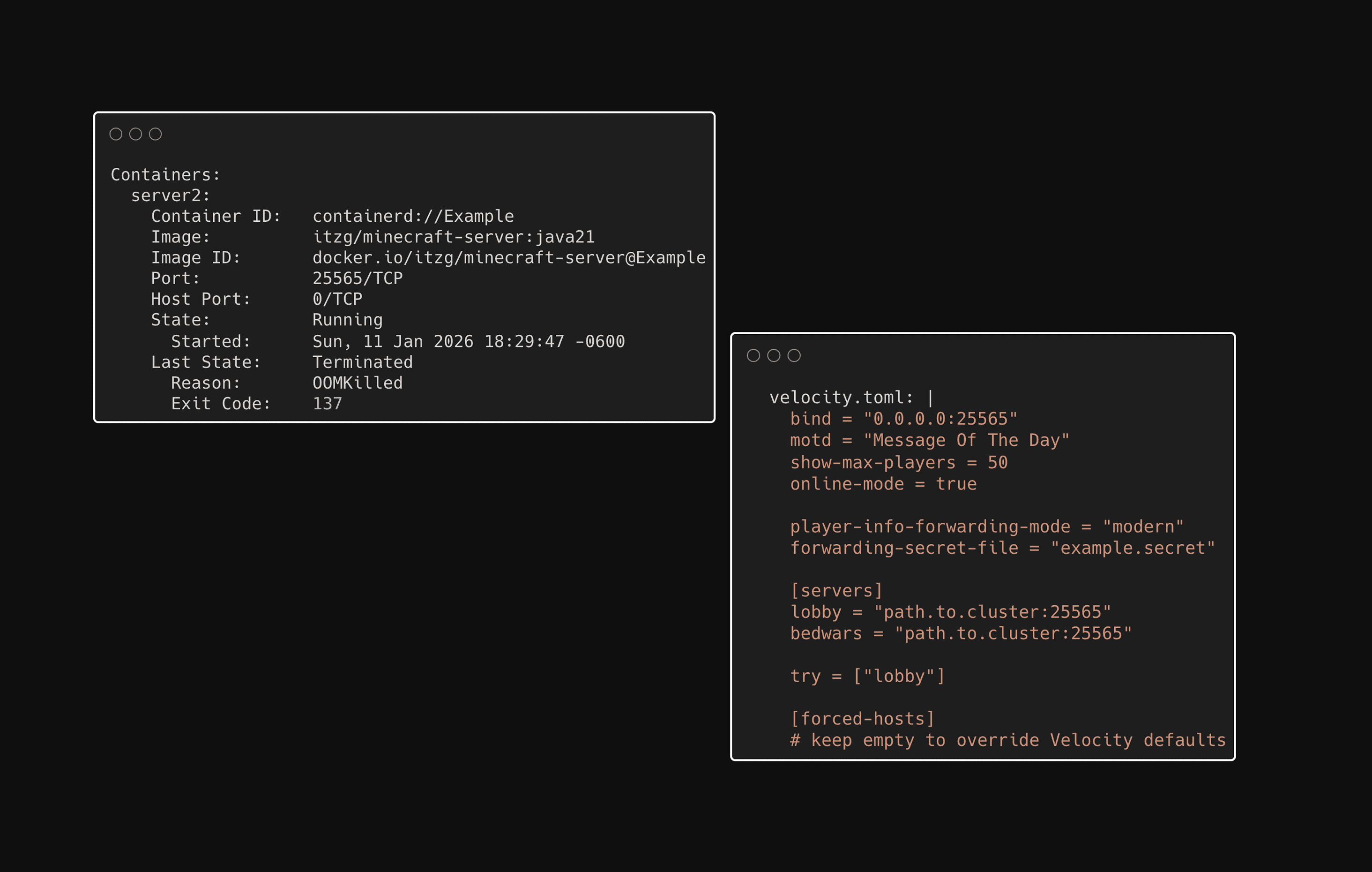

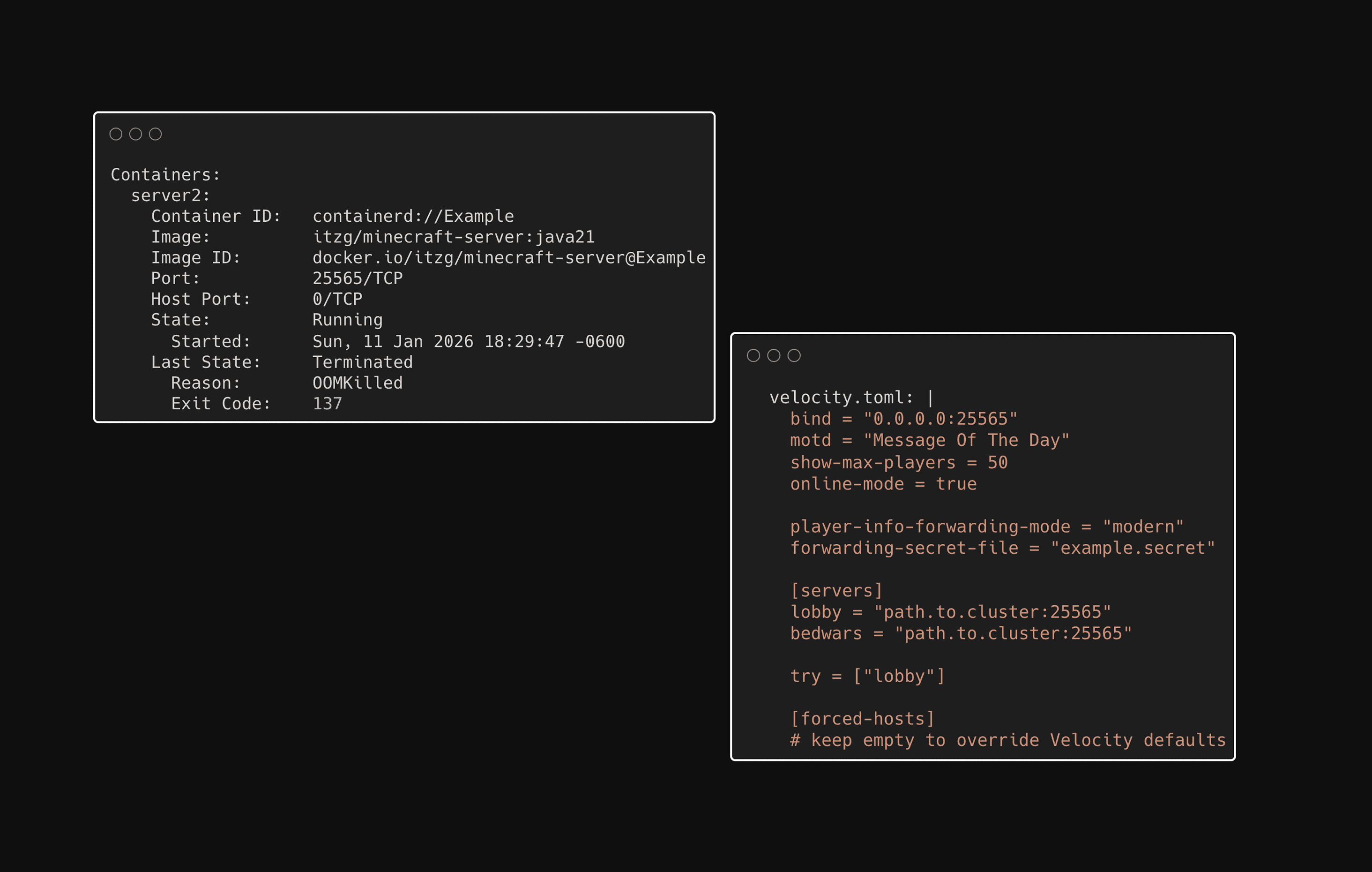

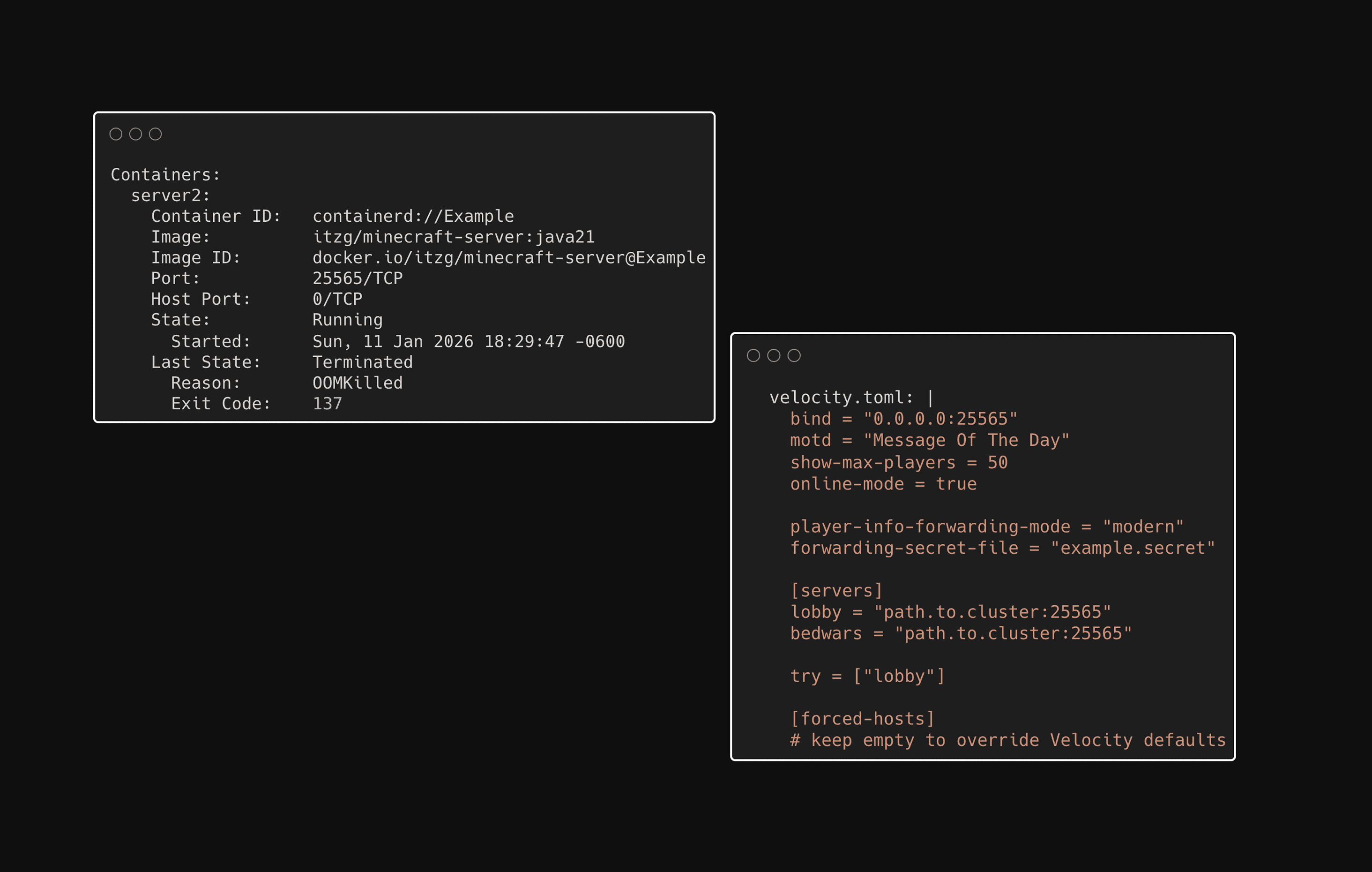

07

Problem Solving

Real-world deployment brought unexpected challenges that required systematic troubleshooting.

The Velocity proxy failed continuously for a week until I discovered that the configuration required an empty [forced-hosts] section in the ConfigMap, a subtle requirement buried in the documentation.
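A minimal sketch of what such a ConfigMap might contain is shown below. The server names, addresses, bind port, ConfigMap name, and namespace are assumptions for illustration, and the real `velocity.toml` would carry many more settings. The detail that matters is the `[forced-hosts]` table present with no entries.

```python
import json

# Minimal velocity.toml sketch. Server names, addresses, and the bind port are
# assumptions; the point is the empty [forced-hosts] table at the end.
VELOCITY_TOML = """\
bind = "0.0.0.0:25577"

[servers]
world1 = "world1:25565"
world2 = "world2:25565"
try = ["world1"]

[forced-hosts]
"""


def velocity_configmap(name: str = "velocity-config",
                       namespace: str = "minecraft") -> dict:
    """Wrap the TOML text in a ConfigMap keyed by the file name Velocity reads."""
    return {
        "apiVersion": "v1",
        "kind": "ConfigMap",
        "metadata": {"name": name, "namespace": namespace},
        "data": {"velocity.toml": VELOCITY_TOML},
    }


if __name__ == "__main__":
    print(json.dumps(velocity_configmap(), indent=2))
```

An empty table header is valid TOML, so the section can be declared without mapping any hostnames to servers.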

Server2 kept crashing due to memory exhaustion, which I diagnosed using kubectl logs to pinpoint the resource allocation issue.
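Alongside `kubectl logs`, the pod status itself records when a container was killed for exceeding its memory limit. The sketch below is a complementary check, not the method described above: it scans output in the shape of `kubectl get pod <name> -o json` for a previous termination with reason `OOMKilled`. The sample pod is hand-written for illustration and is not data from this project.

```python
import json


def oom_killed_containers(pod: dict) -> list[str]:
    """Names of containers whose previous run ended with reason OOMKilled."""
    names = []
    for status in pod.get("status", {}).get("containerStatuses", []):
        terminated = status.get("lastState", {}).get("terminated") or {}
        if terminated.get("reason") == "OOMKilled":
            names.append(status["name"])
    return names


if __name__ == "__main__":
    # Trimmed, hand-written sample shaped like `kubectl get pod <name> -o json`.
    sample = json.loads("""
    {
      "status": {
        "containerStatuses": [
          {
            "name": "minecraft",
            "restartCount": 4,
            "lastState": {"terminated": {"reason": "OOMKilled", "exitCode": 137}}
          }
        ]
      }
    }
    """)
    print(oom_killed_containers(sample))
```

A kernel-level `OOMKilled` termination points at the container limit, whereas a Java `OutOfMemoryError` in the logs points at the JVM heap setting, so checking both helps narrow down which allocation to adjust.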

These debugging experiences taught me the importance of thorough documentation review and proper Kubernetes resource monitoring.

08

Future Expansion

This foundation sets the stage for "Enterprising Minecraft - Phase 2," where I'll implement enterprise-grade security and automation. Planned enhancements include:

Hardening security with container image scanning

Building infrastructure as code with Terraform for reproducible deployments

Exploring multi-region strategies for high availability

Migrating to managed Kubernetes services like EKS and GKE to understand managed orchestration

Implementing automated cloud backup solutions

Each advancement deepens my cloud-native security expertise—directly applicable to enterprise environments.
